Smarter decisions at the speed of collisions

Machine learning is reshaping how ATLAS and CMS filter collisions in real time

Written by:

Piotr Traczyk

—

Smart and fast decision-making is key when dealing with the onslaught of collisions at the LHC. At the High-Luminosity LHC (HiLumi LHC), the ATLAS and CMS experiments are expected to process detector data at rates corresponding to roughly a quarter of the 2025 global internet traffic, all in real time, as part of the first stages of event selection.

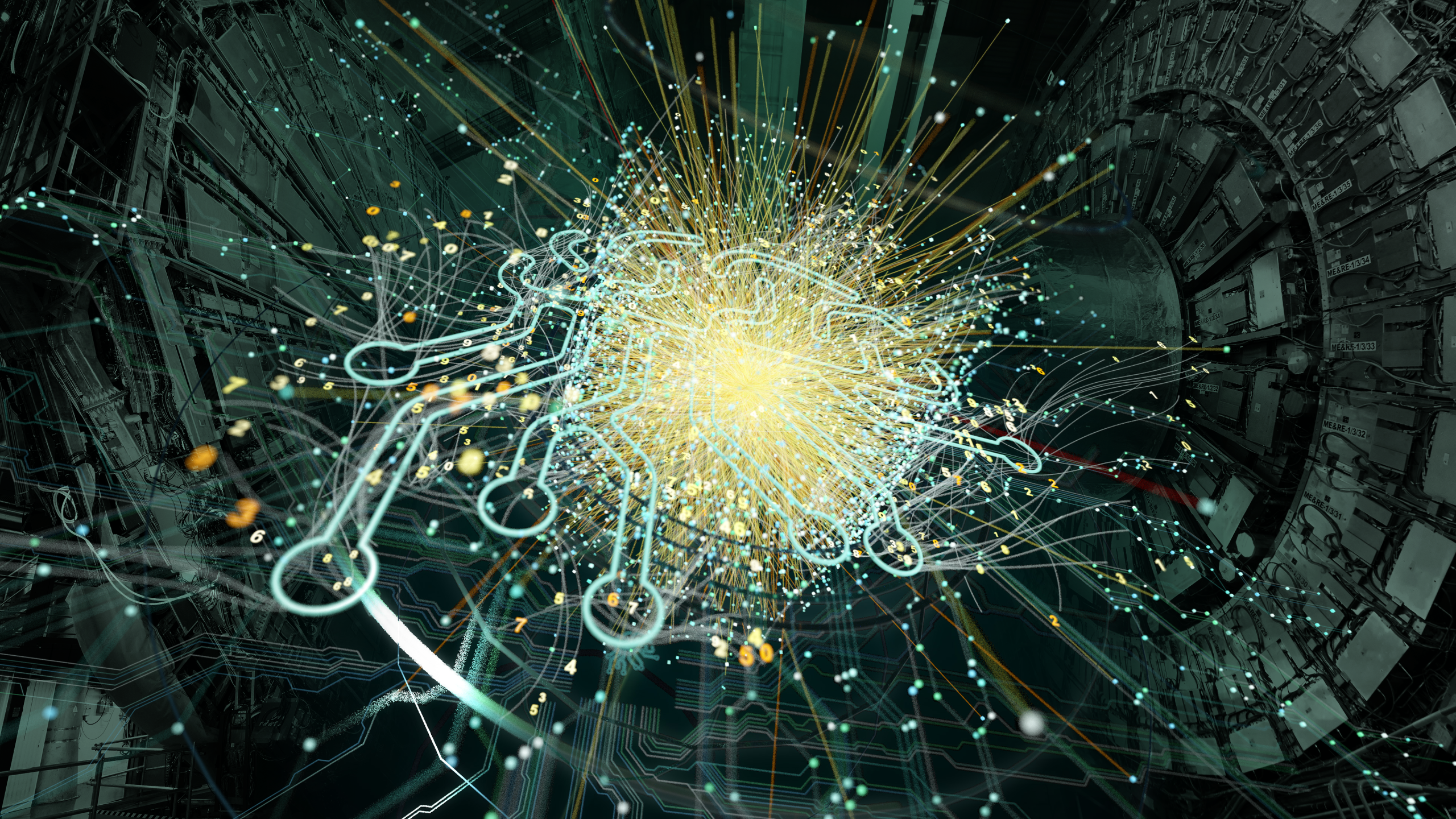

Each second, billions of protons crash into one another at the LHC’s four collision points, generating a data volume so vast that it is not possible to store it in its entirety. Instead, the data has to be filtered in real time by so-called “trigger systems”. Dedicated algorithms estimate which collision events are potentially interesting, based on predefined characteristics, enabling about one in every 20 000 events to be read out and stored for further analysis.

In the quest to find cracks in the Standard Model of particle physics, or new phenomena entirely, researchers at the LHC’s CMS and ATLAS experiments are building smarter and computationally more powerful trigger systems into their detectors, capable of exploiting more data in real time. Recently, solutions based on AI and machine learning have been employed to boost the physics reach of these triggers, opening a powerful new avenue for identifying potentially interesting or anomalous events.

Particle physicists were early adopters of neural networks and have been using machine-learning algorithms for data analysis since the 1990s. Until now, such tools have primarily been used to help identify traces left by particles in the detectors and to categorise the underlying physics processes. These methods have already managed to push the performance of data analysis significantly beyond what was envisioned when the LHC was starting up, allowing CMS and ATLAS to measure key processes – especially those associated with the Higgs boson – much sooner than expected.

But machine learning does more than improve performance: it opens the door to entirely new approaches to discovering unknown phenomena. One example is unsupervised anomaly detection. Instead of targeting specific particles or processes predicted by the Standard Model, this technique searches for any kind of disagreement between data and theory. These algorithms are trained on randomly selected LHC collisions, teaching them to encode “standard” events seen by the detectors, so that physicists can select potentially interesting events in an unbiased way.
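As a rough illustration of the idea, the sketch below learns what “standard” events look like from a sample of typical collisions and then scores new events by how far they deviate from that sample. It uses a simple per-feature z-score, a deliberately basic stand-in for the neural networks used by the experiments. The two toy features, their values and the function names (`fit_standard`, `anomaly_score`) are hypothetical.

```python
import math
import random

def fit_standard(events):
    """Learn the per-feature mean and spread of the 'standard' training events."""
    n = len(events)
    dims = len(events[0])
    means = [sum(e[d] for e in events) / n for d in range(dims)]
    # Fall back to 1.0 if a feature never varies, to avoid dividing by zero.
    stds = [
        math.sqrt(sum((e[d] - means[d]) ** 2 for e in events) / n) or 1.0
        for d in range(dims)
    ]
    return means, stds

def anomaly_score(event, means, stds):
    """Root-mean-square z-score: large values mean 'unlike the training sample'."""
    z2 = [((x - m) / s) ** 2 for x, m, s in zip(event, means, stds)]
    return math.sqrt(sum(z2) / len(z2))

# Toy stand-in for randomly selected collisions: two made-up features per event.
rng = random.Random(0)
training = [(rng.gauss(50.0, 5.0), rng.gauss(2.0, 0.5)) for _ in range(10_000)]
means, stds = fit_standard(training)

typical = (51.0, 2.1)    # close to what the training sample looks like
unusual = (120.0, 6.0)   # far outside it in both features

score_typical = anomaly_score(typical, means, stds)
score_unusual = anomaly_score(unusual, means, stds)
```

No signal model enters the score: an event is flagged only because it is unlike the bulk of the training data, which is what makes this kind of selection unbiased with respect to what the new phenomenon might be.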

“This is a game-changer for particle physics because it allows us to scour the LHC data for new phenomena without pre-judging what those phenomena might look like,” says Maurizio Pierini of CMS. “This is essential as we move into a precision era at the LHC and continue to squeeze the possible hiding places for new physics.”

If this technique is to be fully exploited, however, it cannot be limited to the small fraction of data selected by the CMS and ATLAS trigger systems. For truly unbiased anomaly detection, the algorithm must already be applied at the trigger level in order to avoid the risk of the trigger algorithms removing potentially interesting events before the analysis has a chance to find them. This presents a major challenge because the trigger system has to make a decision every time a new collision happens: 40 million times per second, or once every 25 nanoseconds. At such speeds, there is no time to run computationally intensive machine-learning algorithms. Or is there?

In 2018, CMS researchers developed an open-source tool that translates machine-learning algorithms into the language (firmware) that controls field-programmable gate arrays (FPGAs). These are the custom programmable electronics used to take ultra-quick decisions in the first step of event selection, which is called the level-1 trigger. The team then developed strategies for “compressing” the algorithms, adapting them for implementation in the level-1 trigger electronics without significantly reducing their performance.
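The article does not say which compression strategies were used. As one illustration, assuming that “compressing” includes reducing numerical precision (quantisation) and removing small weights (pruning), a sketch in Python might look like the following. The function names, bit widths and threshold are hypothetical, and this is not the tool’s actual code.

```python
def quantize(weight, total_bits=8, frac_bits=6):
    """Round a weight to signed fixed-point with `frac_bits` fractional bits."""
    scale = 2 ** frac_bits
    lo = -(2 ** (total_bits - 1))      # smallest representable integer code
    hi = 2 ** (total_bits - 1) - 1     # largest representable integer code
    code = max(lo, min(hi, round(weight * scale)))  # round, then clip to range
    return code / scale

def prune(weights, threshold=0.05):
    """Zero out small-magnitude weights so their multiplications can be skipped."""
    return [0.0 if abs(w) < threshold else w for w in weights]

# Made-up weights: prune first, then store what remains at low precision.
weights = [0.7312, -0.0123, 0.25, -1.4999, 3.2]
compressed = [quantize(w) for w in prune(weights)]
```

In this toy example, the weight 3.2 falls outside the 8-bit range and is clipped to the largest representable value. This is the kind of precision loss that has to be weighed against the savings in FPGA resources.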

The ATLAS and CMS experiments are already implementing this approach in the level-1 trigger during data taking. This is giving researchers, for the first time, a dataset collected with the new approach to triggering.

“Anomaly detection triggers are very different from our conventional triggers at the LHC and using them for a potential discovery will require us to develop entirely new data analysis techniques,” says Dylan Rankin from ATLAS. “These first datasets we are collecting at ATLAS and CMS are critical for understanding how to do this. The lessons we are learning are also vital for improving our models and techniques for future trigger development.”

Meanwhile, more advanced approaches are being developed, both within the experiments themselves and in the framework of the Next-Generation Triggers project. Launched in January 2024 as a collaboration between CERN’s Experimental Physics, Theoretical Physics and IT Departments, together with the ATLAS and CMS experiments, the five-year project has taken on much of the R&D effort. Largely driven by early-career researchers, it targets the challenges of the future High-Luminosity LHC, which is scheduled to begin operation in 2030. The primary aim is to extract more physics information from the vastly increased data volumes by improving the selection of the most relevant collision events while efficiently rejecting background. These advances are central to enhancing the sensitivity of the experiments and, ultimately, to increasing the chances of uncovering previously unseen phenomena. To achieve this, the project’s researchers combine modern AI and machine-learning techniques with specialised hardware such as FPGAs, supported by tools like hls4ml for deploying machine-learning models directly on trigger electronics. They are also refining both the guidance from theory and the analysis tools used to study ultra-rare events.

Together, these developments aim to ensure that, even at the extreme data rates of the High-Luminosity LHC, potentially revolutionary signals can be identified rather than lost in the flood of collisions.